Birds, primates, and spoken language origins: behavioral phenotypes and neurobiological substrates

Abstract

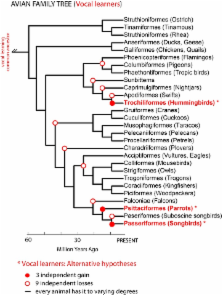

Vocal learners such as humans and songbirds can learn to produce elaborate patterns of structurally organized vocalizations, whereas many other vertebrates, such as non-human primates and most other bird groups, either cannot or can do so only to a very limited degree. Various theories have been proposed to explain the similarities between humans and vocal-learning birds and their differences from other species. One set comprises motor theories, which emphasize the role of the motor system as an evolutionary substrate for vocal production learning. For instance, the motor theory of speech and song perception proposes enhanced auditory perceptual learning of speech in humans and of song in birds, which implies a considerable degree of neurobiological specialization. Another, a motor theory of vocal learning origin, proposes that the brain pathways controlling the learning and production of song and speech were derived from adjacent motor brain pathways. A second set comprises cognitive theories, which address the interface between cognition and the auditory-vocal domains that supports language learning in humans. Here we critically review the behavioral and neurobiological evidence for parallels and differences between so-called vocal learners and vocal non-learners in the context of motor and cognitive theories. In doing so, we note that, behaviorally, vocal-production learning abilities are distributed along a continuum rather than falling into discrete categories, as are the auditory-learning abilities of animals. We propose testable hypotheses on the extent of the specializations and cross-species correspondences suggested by motor and cognitive theories. We believe that how spoken language evolved is likely to become clearer through concerted efforts to test comparative data from many non-human animal species.